How to get your Twitter followers in Excel for…

A couple of days ago I needed to get a complete list of the people following a Twitter account, and I couldn’t find a quick solution.

First, I tried Scraper for Chrome, but since Twitter uses infinite scroll, a very long list (mine isn’t one) would have required too much time and too many steps.

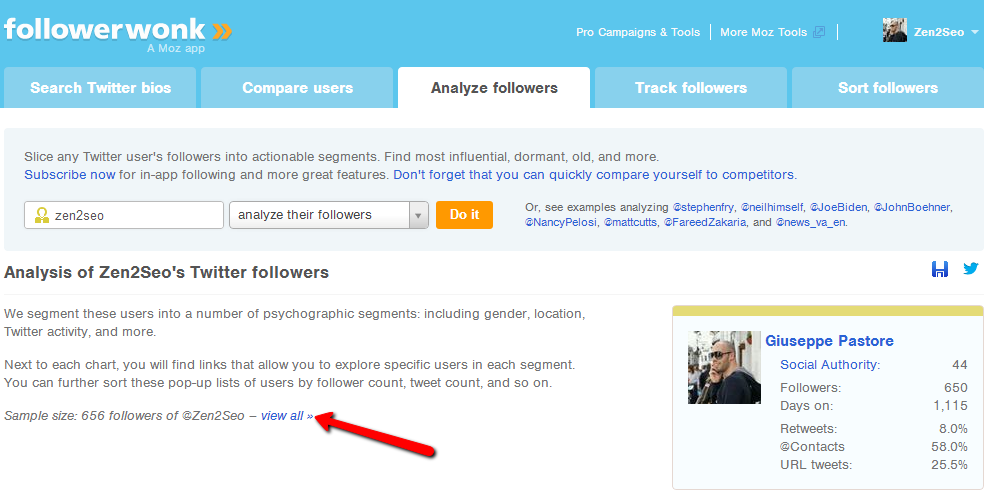

Then I checked Followerwonk: I could view the list, but I couldn’t export it without a Pro plan.

I thought of building an Excel scraper on top of the Twitter API, but I found that the information isn’t easily available, since API v1.1 requires authentication for GET requests sent from a client. And I didn’t want to build an app (nor, probably, would I be able to).

So I had to put a few things together to achieve my goal.

In the end I built a small Excel file that basically scrapes Followerwonk. It’s nothing special, I know, but the detail worth highlighting is that it handles paginated results without requiring any input besides the first page URL.

Let me explain…

If you log in to Followerwonk, you can browse all your followers and followings, but you can’t download them unless you’re a Pro user.

Although I endorse Followerwonk for its analysis capabilities, I just needed my followers in a usable form: too little to justify a paid plan. So I checked whether the list could be scraped using SEO Tools for Excel.

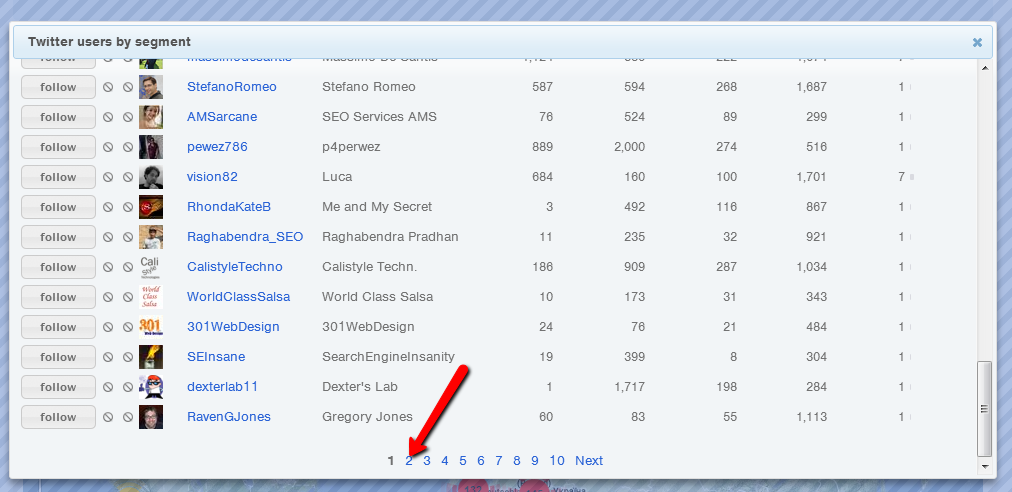

A quick look at the pagination shows that content is served via JavaScript and paginated (50 per page), but the results are loaded using two URL parameters, a session one and a page one (i.e. https://followerwonk.com/?session=slcdb.203513782.422017.fl.db&track_p=2).

This means the list can be scraped, provided you handle the pagination correctly.
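As a sketch of what handling the pagination means, the Python snippet below (not part of the Excel file) builds one URL per results page from the base URL ending in `track_p=` and a follower count. It assumes 50 results per page and page numbers starting at 1, as observed above; `page_urls` is a hypothetical helper, and the session value is just the example from this post, which will long since have expired.

```python
import math

PAGE_SIZE = 50  # assumption: Followerwonk shows 50 followers per results page


def page_urls(base_url: str, follower_count: int) -> list:
    """Return one URL per results page by appending the page number to base_url.

    base_url is assumed to end with 'track_p=' (no page value), as in the post.
    """
    pages = math.ceil(follower_count / PAGE_SIZE)
    return [f"{base_url}{page}" for page in range(1, pages + 1)]


BASE = "https://followerwonk.com/?session=slcdb.203513782.422017.fl.db&track_p="
for url in page_urls(BASE, 120):
    print(url)
```

With 120 followers this prints three URLs, ending in `track_p=1`, `track_p=2` and `track_p=3`.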

I know you want the bacon, so first of all this is what you need:

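```
=XPathOnUrl(Sheet2!$B$1&IF(MOD(A1;50)<>0;INT(A1/50)+1;INT(A1/50));"//table/tbody/tr["&IF(MOD(A1;50)<>0;IF(INT(A1/50)>=1;A1-INT(A1/50)*50;A1);A$50)&"]/td/a";"href")
```

The formula uses semicolons as argument separators; depending on your Excel regional settings, you may need commas instead.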

And now, if you’re interested, a bit of explanation.

1) Sheet2!$B$1 is the cell containing the URL to scrape, without the page value (i.e. https://followerwonk.com/?session=slcdb.203513782.422017.fl.db&track_p=).

2) Column A contains the numbers from 1 to your follower count, one per row.

3) We append the page value to the URL with a simple check: MOD(A1;50) is different from zero when the current index is not a multiple of 50. In that (true) case, INT(A1/50) is 0 on page 1, 1 on page 2, and so on, so INT(A1/50)+1 is the page value. At rows 50, 100, 150, etc., we fall into the false case and simply take INT(A1/50).

4) We use XPathOnUrl (you need SEO Tools for Excel) on the resulting URL to get the href attribute of the //table/tbody/tr[]/td/a element.

5) The tr[] index goes from 1 to 50. We check whether MOD(A1;50) is different from 0, which means we are not at the last item of the current page. If it is 0, we just need the 50th element (A$50, which holds the value 50); otherwise we need to count from 1 to 49 and then, after 50, restart from 1. So we check whether INT(A1/50)>=1, which means we are past the 50th row: in that case the index is A1-INT(A1/50)*50, e.g. in row 101, A101-INT(A101/50)*50=101-100=1. If instead we have not reached the 50th row yet, we just use the current index as it is (e.g. in row 12, A12 = 12).
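To double-check the index arithmetic in steps 3 to 5, here is a minimal Python sketch (not part of the Excel file) that mirrors the formula. `page_and_row`, `xpath_for_row`, `href_for_row` and the sample markup are hypothetical names and data chosen for illustration. Inside Excel, XPathOnUrl does the fetching and parsing for you; here the standard library’s `xml.etree.ElementTree` stands in for it, which only works on well-formed XML, so real Followerwonk pages would likely need a proper HTML parser.

```python
import xml.etree.ElementTree as ET
from typing import Optional, Tuple

PAGE_SIZE = 50  # Followerwonk results per page


def page_and_row(n: int) -> Tuple[int, int]:
    """Map a follower index n (the value in column A, from 1) to (page, tr index).

    Mirrors the two IF branches of the Excel formula:
      page = IF(MOD(n;50)<>0; INT(n/50)+1; INT(n/50))
      row  = IF(MOD(n;50)<>0; IF(INT(n/50)>=1; n-INT(n/50)*50; n); 50)
    """
    if n % PAGE_SIZE != 0:
        page = n // PAGE_SIZE + 1
        row = n - (n // PAGE_SIZE) * PAGE_SIZE if n // PAGE_SIZE >= 1 else n
    else:
        page = n // PAGE_SIZE
        row = PAGE_SIZE  # the A$50 cell, which holds 50
    return page, row


def xpath_for_row(row: int) -> str:
    # Same string the formula builds: "//table/tbody/tr[" & row & "]/td/a"
    return f"//table/tbody/tr[{row}]/td/a"


def href_for_row(page_html: str, row: int) -> Optional[str]:
    """Return the href of the first link in table row `row`, or None if absent.

    Assumes page_html is well-formed XML; ElementTree is not an HTML parser.
    """
    root = ET.fromstring(page_html.strip())
    # ElementTree only accepts relative paths, so "//table..." becomes ".//table..."
    link = root.find("." + xpath_for_row(row))
    return link.get("href") if link is not None else None


# Hypothetical, simplified results page (made-up profile URLs).
SAMPLE_PAGE = """
<html><body><table><tbody>
  <tr><td><a href="https://twitter.com/example_one">example_one</a></td></tr>
  <tr><td><a href="https://twitter.com/example_two">example_two</a></td></tr>
</tbody></table></body></html>
"""

print([page_and_row(n) for n in (1, 49, 50, 51, 100, 101)])
# [(1, 1), (1, 49), (1, 50), (2, 1), (2, 50), (3, 1)]
print(href_for_row(SAMPLE_PAGE, 2))
# https://twitter.com/example_two
```

The nested IF is equivalent to `(n - 1) // 50 + 1` for the page and `(n - 1) % 50 + 1` for the row; the formula simply spells out the multiple-of-50 case explicitly.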

Et voilà: your list, for free.

Now, I hope to be in the list of people you follow.

And if you liked the post and find it handy, you can say thanks by linking to it in your next one and sharing it on your social profiles and/or Inbound.org.

19 COMMENTS

Thanks for writing this up, it’s very useful. I’ve actually been toying with Selenium and Excel for some basic scraping tasks. You should check it out, and I might even write about it if I get a chance.

Hi Chris,

thanks for the comment, I’m glad the post is useful. I’ll check Selenium, it looks interesting…

I would love to use this…any way you can make it a bit clearer?

I installed SeoTools (I tried the social button to test it and it was pulling in #s, so I am assuming it works), pasted the URL without the page value into Sheet2 B1, numbered column A in Sheet1 for my # of followers, then pasted the formula into Sheet1 B1, and got nothing… it doesn’t seem to recognize it as a formula.

What am I missing?

thanks!

Hi DrRJE,

it looks like I wrongly translated Italian Excel functions into English ones. I’ve edited the formula. Try the new one and let me know!

thanks for the response!

still not getting anything though… it’s giving me an error at the first instance of MOD(A1,50): it highlights the A1.

any chance we could get a youtube video of you running one?

🙂

it’s MOD(A1;50), with the semicolon…

yeah… it’s a semicolon in the formula, although changing it to a comma moves the error on to the 0 after the

with the semicolon there, the error is on A1.

Here are the steps I took:

1. Install SeoTools.

2. In Sheet 2, pasted the URL from Followerwonk into B1 (no page at the end of the URL, so it ends with an =)

3. Numbered Sheet 1 Column A for the # of followers

4. in Sheet 1 B1, pasted the formula (with the semicolon) and hit enter.

5. read the error 🙂

Thanks so much for your help. I paid for the trial and it seems to be capped at 50k, so this would help a lot.

Hmm, that’s strange. Is Sheet2 the name of the second sheet? Maybe send me your Excel file at info[at]posizionamentozen.com and I’ll check it 🙂

that’d be great…looks like your mailbox is over quota at the moment though 😉

Just replied. Keep in mind that the Followerwonk URL expires, so you have to get a new one each time you use the Excel file…

working great now 🙂

thank you!

Hi Giuseppe, I wanted to tell you that I followed the steps to the letter (I don’t understand English very well), but nothing seems to happen: no list loads. What should I do to fix it??

Hi Alberto,

in your case, the Excel functions need to be in Italian.

I have tried it and it’s working perfectly, but I want to prepare a sheet for other Twitter accounts as well. I don’t have login credentials for other accounts, but I want their followers: in that scenario, what do I do? Please guide me.

To make it easier: I don’t want my followers, I want Google’s followers in an Excel sheet. Is it possible?

You can apply it on any profile you want to.

Hi, interesting article! I just want to share this startup with you. They can actually help you with scraping Twitter: bit.ly ScrapeTwitter

[…] you’re not afraid to do a little exploitation and break a few terms of service, you can use this workaround to hijack their data, specifically by circumventing the scripts that limit you. As long as they […]

Hey Giuseppe Pastore, can you please make a video explaining all the information given in the article above? I’m facing some problems, since I’m new to SEO Tools for Excel.

Thanks in advance.

[…] FriendOrFollow seems to have stopped existing. RIP FriendOrFollow! We however came across the following post to do a similar thing using Followerwonk. It’s a post from 2013, but it still works at the […]